The Rise of AI Music and the Struggle for Transparency

In mid-2025, frustration boiled over for Cedrik Sixtus, a software developer based in Leipzig. He noticed that his Spotify playlists were increasingly filled with tracks he suspected were generated by artificial intelligence. Determined to take action, he developed a tool called the Spotify AI Blocker, which automatically labels suspected AI-generated tracks and blocks them from playing. He shared it on code-sharing websites, where hundreds of users downloaded it.

The Spotify AI Blocker filters out more than 4,700 suspected AI artists, drawing on community efforts and external detection tools. It identifies patterns such as unusually high release volumes and AI-style cover art. However, Sixtus warns that using his software may violate Spotify’s terms of service. His frustration is not isolated; it reflects a growing wariness of AI-generated content among Spotify users.
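The release-volume signal described above can be illustrated with a short sketch. This is not Sixtus's code: the `Release` record, the 30-day window, and the threshold of 10 releases are all hypothetical values chosen for illustration, and a real tool would combine several signals before blocking anyone.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Release:
    artist_id: str      # hypothetical identifier, not a real Spotify field
    released_on: date


def flag_high_volume_artists(releases, today, window_days=30, max_releases=10):
    """Return artist IDs with more than max_releases releases in the window."""
    cutoff = today - timedelta(days=window_days)
    counts = Counter(
        r.artist_id for r in releases if cutoff <= r.released_on <= today
    )
    return {artist for artist, n in counts.items() if n > max_releases}


def should_skip(artist_id, blocklist, flagged):
    """Skip a track if its artist is on the shared blocklist or was flagged."""
    return artist_id in blocklist or artist_id in flagged


# Made-up example data: artist_a releases daily, artist_b releases once.
today = date(2025, 7, 1)
releases = [Release("artist_a", today - timedelta(days=i)) for i in range(12)]
releases.append(Release("artist_b", today))

blocklist = {"artist_c"}  # stands in for a community-maintained list
flagged = flag_high_volume_artists(releases, today)
```

A volume threshold alone would also catch prolific human artists, which is one reason to treat a flag as a prompt for review instead of proof.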

A Growing Concern Among Users

On Spotify’s community forums, discussions about AI music have become heated. For some, the issue is that AI-generated music doesn’t sound right. Others simply don’t want to listen to music created by machines. In response, Spotify has taken some steps to address these concerns. In April, it launched a test feature that shows, in a song’s credits, how an artist used AI. However, because the system relies on voluntary disclosure from artists, its effectiveness is open to question.

Spotify’s approach is far from comprehensive. While it acknowledges the need for industry-wide alignment, it hasn’t yet implemented a clear way for users to filter out AI-generated content. Robert Prey, a researcher at the University of Oxford’s Oxford Internet Institute, describes this as a difficult balancing act for Spotify. The platform must navigate the complex landscape of AI while maintaining trust among listeners, artists, and the broader industry.

The Impact of AI Music on the Industry

The rise of AI music tools like Suno and Udio has introduced both excitement and unease within the music world. These platforms can generate polished songs in seconds, complete with lyrics, vocals, and instrumentation. A recent controlled test by Deezer and Ipsos revealed that 97% of listeners failed to distinguish between AI-generated and human-made tracks. This blurring of lines raises concerns about the impact on human artists, as tens of thousands of AI tracks are uploaded daily to streaming platforms.

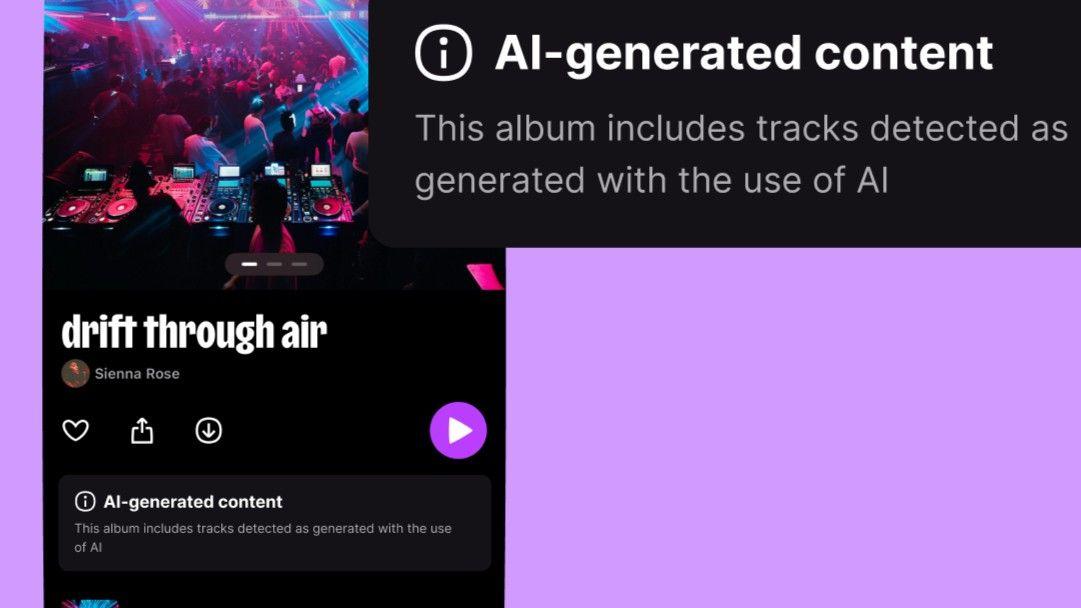

While Spotify, YouTube Music, and Amazon Music have avoided clear user-facing labels or filters for AI-generated music, there are signs that this may change. Deezer, a smaller competitor, has taken a stronger approach by tagging albums that contain AI-generated tracks and excluding them from algorithmic recommendations. Apple Music has also announced plans to introduce transparency tags, though critics question their reliability.

The Complexity of Labeling AI Music

Labeling AI-generated music is not a straightforward task. Maya Ackerman, an expert in AI and computational creativity, points out that AI music exists on a spectrum. Some tools produce music entirely from prompts, while others assist with specific parts of the creative process. This complexity makes it challenging to determine when a label is necessary.

Bob Sturm, a researcher at KTH Royal Institute of Technology in Sweden, highlights the technical challenges of accurately detecting AI-generated tracks. False positives could lead to unfair labeling of human musicians. As AI tools improve, detection systems must be continually retrained, leading to what Sturm calls an “AI music arms race.”

The Future of AI in Music

Despite these challenges, many believe that AI-generated music should be labeled. David Hoffman, a professor at Duke University, argues that platforms should at least label fully AI-generated tracks and assess the scale of the issue. Listeners, too, seem to support this idea. In the Deezer-Ipsos poll, around 80% of respondents said AI-generated music should be clearly labeled.

Economic factors may also play a role in Spotify’s reluctance to implement labeling and filtering. According to Prey, Spotify is focused on platform growth, and keeping recommendation systems unencumbered helps achieve this. Detecting AI-generated content adds cost, while serving up AI music may be cheaper.

The Road Ahead

Past controversies have fueled suspicion about Spotify’s practices. The company has been accused of promoting lower-cost music for background-style playlists, which it denies. Spotify says that all tracks on its platform are delivered by third-party rightsholders and that the payment model is the same for all.

As the industry evolves, standards bodies like DDEX are working on a broad industry standard for AI disclosures in music credits. Under the EU AI Act, certain AI-generated content must be labeled from August 2026. However, how Spotify will implement these rules remains unclear.

David Hesmondhalgh, a professor at the University of Leeds, describes the current state of AI music as the “Wild West.” However, he expects some kind of order to emerge, much as the streaming industry emerged from the file-sharing panic of the early 2000s.

Spotify appears to be recognizing the pressure, recently announcing features aimed at elevating human artistry, including SongDNA and “About the Song,” which give premium users deeper insight into a track’s origins.

“We believe the right response to AI in music isn’t any single policy, it’s a combination of proactive controls, industry-wide standards, and a deeper investment in the human creativity behind every track,” a Spotify spokesperson said.