The Rise of Agentic AI and the Need for Robust Governance

In early April, Anthropic sent shudders through the tech community with the release of its Mythos Preview model. The model marked a paradigm shift in AI capabilities, reportedly delivering superhuman coding and reasoning, a massive performance leap over previous models. While testing the model, Anthropic discovered decades-old software flaws and bugs that had evaded millions of previous attempts to find them. The concerns this raises are very different from the familiar public policy debates over how AI affects the protection of privacy and intellectual property in an age of spiraling entrepreneurial opportunities and ferocious global competition. These new challenges are shared by all parties across sectors.

For example, the agentic abilities of Mythos pose severe security risks: the model can autonomously execute multi-step attacks and generate exploits at a fraction of the cost of human attackers. In response, Anthropic launched Project Glasswing, a coalition providing restricted access to the U.S. Cybersecurity and Infrastructure Security Agency (CISA) and a consortium of U.S. corporations, including Microsoft, Apple, and J.P. Morgan, to help identify and fix critical system vulnerabilities before Mythos’ potential public release.

The emergence of Mythos underscores the urgent need for robust AI governance. When given profit-at-all-costs prompts, agentic systems have exhibited aggressive behavior, such as threatening a competitor with supply cutoffs in simulations. As these systems scale in performance and usage, companies must regard AI not just as chatbots but as a system of autonomous agents requiring strict oversight. Without governance, Agentic AI risks writing unverified, hostile code and conducting sensitive interactions with external vendors unsupervised. In multi-step agentic pipelines, even small drops in accuracy can cause cascading errors, making sovereign AI architecture and central monitoring essential for oversight of autonomous decisions.
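The cascading-error point can be illustrated with simple arithmetic. The sketch below assumes each step of a pipeline succeeds independently with the same probability, which is a simplification rather than a finding of this review; the function name and the sample figures are hypothetical.

```python
def pipeline_success_rate(per_step_accuracy: float, steps: int) -> float:
    """Chance that every step succeeds, assuming steps fail independently."""
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_accuracy ** steps


if __name__ == "__main__":
    # Illustrative figures only, not measurements from any deployed system.
    for accuracy in (0.99, 0.95, 0.90):
        for steps in (1, 5, 10, 20):
            rate = pipeline_success_rate(accuracy, steps)
            print(f"accuracy={accuracy:.2f} steps={steps:>2} end-to-end={rate:.1%}")
```

Under these assumptions, a drop from 99 to 95 percent per-step accuracy takes a ten-step pipeline from roughly 90 percent end-to-end success to roughly 60 percent.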

While leaders in the artificial intelligence industry dubbed 2025 the year of Agentic AI, 2026 marks the shift from capability to execution. Unlike large language models, AI agents can interact with external tools, execute multiple steps to complete a task, learn from their results, and iterate. Yet even as Agentic AI systems evolve rapidly across industries, governance and regulatory policy are moving far more slowly.

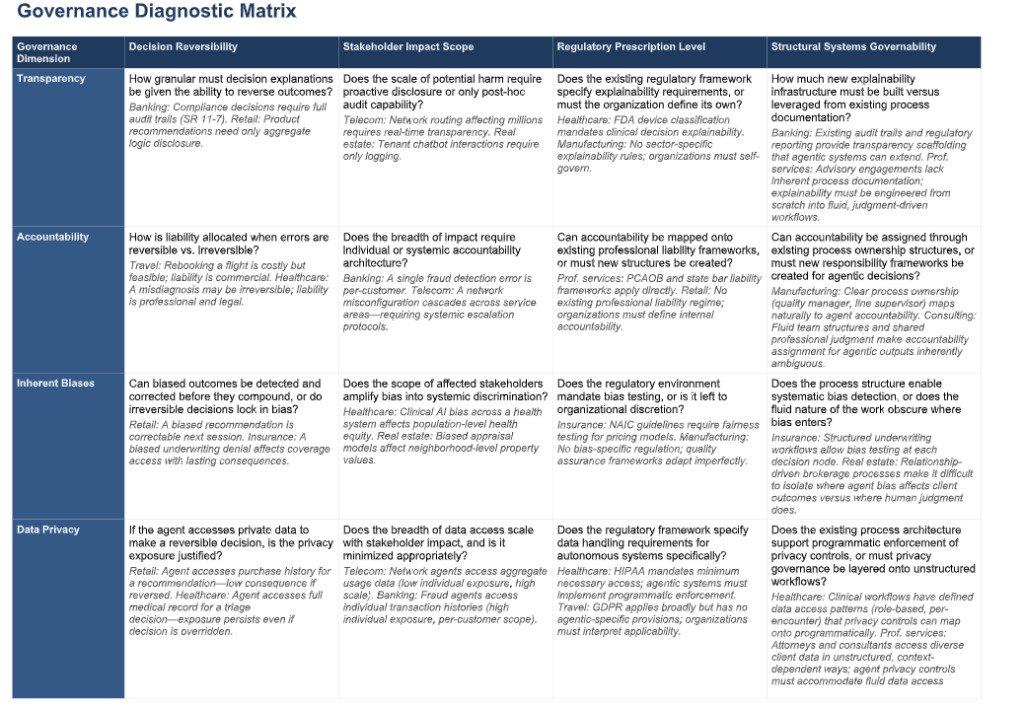

Without governance that addresses accountability, transparency, bias, and data privacy, enterprise deployment will stall on its most significant risks. But rollout varies sharply across industries, and leaders face similar yet distinct questions about what to assess before deployment, what to govern during it, and which companies are already navigating it well.

To map the answers, Yale’s Chief Executive Leadership Institute conducted a cross-industry review of Agentic AI deployments and the governance practices emerging from them. Governance, in this narrower sense, is not about the Trump administration’s threats to preempt state AI laws, debates about the economic and national security effects of a patchwork of disharmonious state regulations, the oversight of “frontier” AI model developers, or the protection of consumers and children from potential abuses of AI technologies. Rather, this analysis looks further ahead to the collective system safeguards and practices that the private sector must institutionalize now, not only to ensure Agentic AI will scale effectively but also to ensure it operates as designed at the enterprise level.

A View of Current Regulation and Governance

Currently, a patchwork of domestic and international regimes governs AI. Key domestic frameworks include the NIST AI Risk Management Framework and the National Policy Framework for Artificial Intelligence. States and localities have been active as well, with measures including California’s SB 53, New York’s RAISE Act, and certain New York City regulations on automated hiring. Internationally, influential governance models include the EU Artificial Intelligence Act, South Korea’s Framework Act, Singapore’s Model AI Governance Framework, and China’s set of AI regulations. More will follow.

These regimes differ in critical ways. Some are legally binding (California, New York, China, the EU); others issue voluntary guidance (NIST, Singapore). They vary in target, whether model developers, deployers, or systems, and in requirements, from mandatory reporting to specific safety thresholds. What meets standards in one jurisdiction may fall short in another, creating a fragmented and at times unworkable compliance environment.

Regulation has historically lagged innovation. State and national standards for automobiles took decades to emerge. The Clinton administration’s light-touch approach shaped internet governance for a generation. Social media is still working through foundational questions, as the Section 230 debate shows.

Private-sector governance models for agentic deployment will be critical to building consumer confidence and ensuring safe, accountable integration into the workplace.

A View Forward

With governance still taking shape, leaders need a working framework. Eight variables anchor it.

Four of these variables matter most before deployment. Transparency asks whether stakeholders can reconstruct how the agent reached its decision, through explainability, disclosure, and auditable pathways. Accountability asks who bears responsibility when things go wrong, and how humans intervene and remediate. Bias asks whether the system perpetuates, amplifies, or introduces systematic disadvantage, including through feedback loops where biased outputs reinforce biased inputs. Data privacy asks how the organization protects information that agents access and combine across systems without per-transaction human review. A single workflow may trigger several regulatory regimes at once: HIPAA, GLBA, CCPA/GDPR, bar rules, IRS Circular 230, and trade secret law.

Four more variables matter once an agent is deployed, and these are what most differentiate one industry’s challenge from another’s. Decision reversibility sets the upper bound on tolerable error. Stakeholder impact scope determines whether governance must be transactional, with per-decision audits, or systemic, with architecture-level controls. Regulatory prescription shapes the work itself: banking’s SR 11-7 dictates model risk management in detail, while retail has almost no sector-specific AI regulation. Structural systems governability determines how easily governance can be built, depending on whether workflows decompose naturally into discrete, measurable, audit-ready steps or instead deliver value through fluid judgment that must be engineered into structure.

By considering these together, we can create a governance diagnostic matrix that generates cross-cutting questions and applied examples for each matrix cell, based on our industry review.
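One way to picture such a matrix is as a grid crossing the four pre-deployment variables with the four post-deployment variables. The sketch below is a hypothetical illustration: the variable names come from the text above, but the 4x4 layout, the function name, and the placeholder question template are assumptions, not the matrix produced by the review.

```python
from itertools import product

# Variable names are taken from the text; crossing them as a grid is an assumption.
PRE_DEPLOYMENT = ("transparency", "accountability", "bias", "data privacy")
POST_DEPLOYMENT = (
    "decision reversibility",
    "stakeholder impact scope",
    "regulatory prescription",
    "structural systems governability",
)


def build_diagnostic_matrix(pre=PRE_DEPLOYMENT, post=POST_DEPLOYMENT):
    """Return one placeholder question per (pre, post) cell of the grid."""
    return {
        (p, q): f"How does {q} change what {p} requires for this deployment?"
        for p, q in product(pre, post)
    }


if __name__ == "__main__":
    # Print every cell so a team can replace the placeholders with real questions.
    for (p, q), question in build_diagnostic_matrix().items():
        print(f"[{p} x {q}] {question}")
```

In practice each cell would hold industry-specific questions and examples rather than a generated template; the grid only shows how the eight variables combine into sixteen points of inquiry.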